
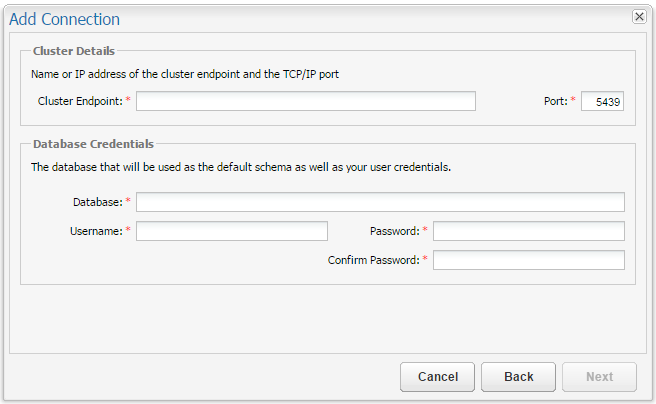

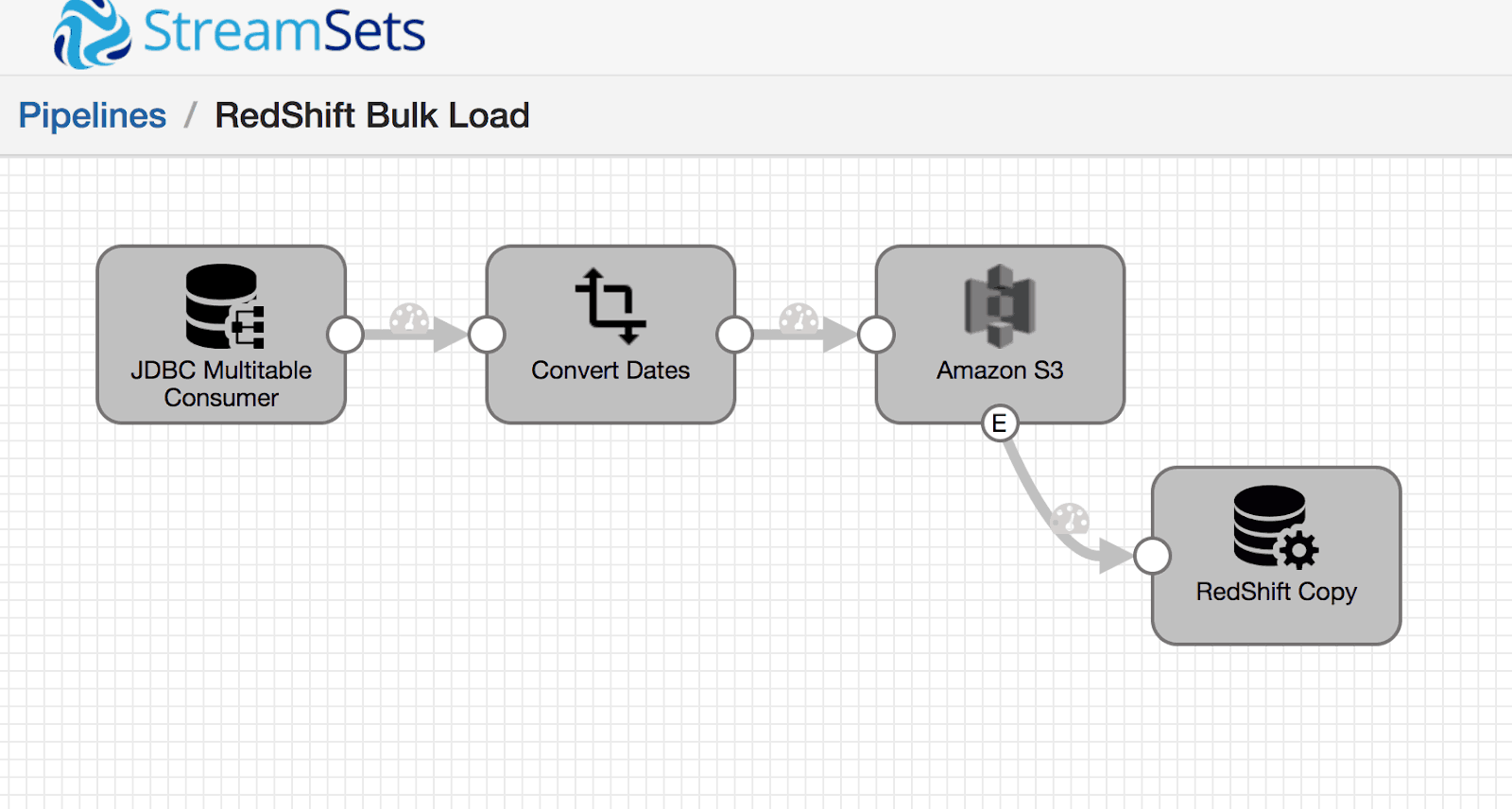

Python:

    # Read data from a table using Databricks Runtime 10.4 LTS and below
    df = (spark.read
      # ...
      .load()
    )

    # Read data from a table using Databricks Runtime 11.3 LTS and above
    df = (spark.read
      # ...
      .option("port", "port")  # Optional - will use default port 5439 if not specified.
      .option("dbtable", "schema-name.table-name")  # if schema-name is not specified, default to "public".
      # ...
      .load()
    )

    # Read data from a query
    df = (spark.read
      # ...
      .load()
    )

    # After you have applied transformations to the data, you can use
    # the data source API to write the data back to another table

    # Write back to a table
    (df.write
      # ...
      .save()
    )

    # Write back to a table using IAM Role based authentication
    (df.write
      # ...
      .save()
    )

Scala:

    // Read data from a table using Databricks Runtime 10.4 LTS and below
    val df = spark.read
      // ...
      .load()

    // Read data from a table using Databricks Runtime 11.3 LTS and above
    val df = spark.read
      // ...
      .option("port", "port") /* Optional - will use default port 5439 if not specified. */
      .option("dbtable", "schema-name.table-name") /* if schema-name is not specified, default to "public". */
      // ...
      .option("forward_spark_s3_credentials", true)
      .load()

    // Read data from a query
    val df = spark.read
      // ...
      .option("query", "select x, count(*) group by x")
      // ...
      .option("forward_spark_s3_credentials", true)
      .load()

    // After you have applied transformations to the data, you can use
    // the data source API to write the data back to another table

    // Write back to a table
    df.write
      // ...
      .save()

    // Write back to a table using IAM Role based authentication
    df.write
      // ...
      .save()

Securing JDBC: Unless any SSL-related settings are present in the JDBC URL, the data source by default enables SSL encryption and also verifies that the Redshift server is trustworthy (that is, sslmode=verify-full). For that, a server certificate is automatically downloaded from the Amazon servers the first time it is needed. In case that fails, a pre-bundled certificate file is used as a fallback. This holds for both the Redshift and the PostgreSQL JDBC drivers.

In case there are any issues with this feature, or you simply want to disable SSL, you can call .option("autoenablessl", "false") on your DataFrameReader or DataFrameWriter.

If you want to specify custom SSL-related settings, you can follow the instructions in the Redshift documentation: Using SSL and Server Certificates in Java and JDBC Driver Configuration Options. Any SSL-related options present in the JDBC URL used with the data source take precedence (that is, the auto-configuration will not trigger).

Encrypting UNLOAD data stored in S3 (data stored when reading from Redshift): According to the Redshift documentation on Unloading Data to S3, “UNLOAD automatically encrypts data files using Amazon S3 server-side encryption (SSE-S3).” Redshift also supports client-side encryption with a custom key (see: Unloading Encrypted Data Files) but the data source lacks the capability to specify the required symmetric key.

Encrypting COPY data stored in S3 (data stored when writing to Redshift): According to the Redshift documentation on Loading Encrypted Data Files from Amazon S3: You can use the COPY command to load data files that were uploaded to Amazon S3 using server-side encryption with AWS-managed encryption keys (SSE-S3 or SSE-KMS), client-side encryption, or both. COPY does not support Amazon S3 server-side encryption with a customer-supplied key (SSE-C).

url: A JDBC URL, of the format jdbc:subprotocol://:/database?user=&password=

subprotocol can be postgresql or redshift, depending on which JDBC driver you have loaded. One Redshift-compatible driver must be on the classpath and match this URL. host and port should point to the Redshift master node, so security groups and/or VPC must be configured to allow access from your driver application. database identifies a Redshift database name. user and password are credentials to access the database, which must be embedded in this URL for JDBC, and your user account should have necessary privileges for the table being referenced.

search_path: Will be set using the SET search_path to command. Should be a comma-separated list of schema names to search for tables in. See Redshift documentation of search_path.

aws_iam_role: Fully specified ARN of the IAM Redshift COPY/UNLOAD operations role attached to the Redshift cluster, for example, arn:aws:iam::123456789000:role/.

forward_spark_s3_credentials: If true, the data source automatically discovers the credentials that Spark is using to connect to S3 and forwards those credentials to Redshift over JDBC.
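The JDBC URL layout and the two defaults mentioned in the examples (port 5439, schema "public") can be sketched in plain Python. This is only an illustration: `build_jdbc_url` and `qualify_table` are hypothetical helper names, not part of the data source, and percent-encoding the credentials is an assumption made here so that special characters do not break the URL.

```python
from urllib.parse import quote

# Default port named in the read examples.
DEFAULT_REDSHIFT_PORT = 5439


def build_jdbc_url(subprotocol, host, database, user, password,
                   port=DEFAULT_REDSHIFT_PORT):
    """Hypothetical helper: assemble a URL of the documented shape
    jdbc:subprotocol://host:port/database?user=...&password=..."""
    if subprotocol not in ("postgresql", "redshift"):
        raise ValueError("subprotocol must be 'postgresql' or 'redshift'")
    # Assumption: credentials are percent-encoded before being embedded.
    return (
        f"jdbc:{subprotocol}://{host}:{port}/{database}"
        f"?user={quote(user, safe='')}&password={quote(password, safe='')}"
    )


def qualify_table(dbtable):
    """Hypothetical helper: mirror the rule that an unqualified table
    name defaults to the "public" schema."""
    return dbtable if "." in dbtable else f"public.{dbtable}"


if __name__ == "__main__":
    print(build_jdbc_url("redshift", "example-host", "dev", "alice", "p@ss"))
    print(qualify_table("orders"))
```

Keeping the URL assembly in one function makes it easier to check that the subprotocol matches the driver on the classpath before any connection is attempted.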
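The precedence rule for SSL settings (settings in the JDBC URL win, otherwise verification is enabled unless autoenablessl is false) can be modelled as a small decision function. This is a sketch of the described behaviour, not the data source's actual code: `effective_ssl_settings` is a hypothetical name, and treating every `?key=value` parameter whose name starts with "ssl" as "SSL-related" is an assumption.

```python
from urllib.parse import urlsplit, parse_qsl


def jdbc_query_params(jdbc_url):
    """Parse the ?key=value parameters of a JDBC URL into a dict."""
    # Drop the leading "jdbc:" so the remainder parses like a normal URL.
    stripped = jdbc_url[len("jdbc:"):] if jdbc_url.startswith("jdbc:") else jdbc_url
    return dict(parse_qsl(urlsplit(stripped).query, keep_blank_values=True))


def effective_ssl_settings(jdbc_url, autoenablessl=True):
    """Hypothetical model of the documented precedence rule."""
    params = jdbc_query_params(jdbc_url)
    # Assumption: a parameter is SSL-related if its name starts with "ssl".
    user_ssl = {k: v for k, v in params.items() if k.lower().startswith("ssl")}
    if user_ssl:
        # SSL options in the URL take precedence; auto-configuration is skipped.
        return user_ssl
    if not autoenablessl:
        # Equivalent to .option("autoenablessl", "false").
        return {}
    # Default: SSL with full server verification.
    return {"sslmode": "verify-full"}


if __name__ == "__main__":
    print(effective_ssl_settings("jdbc:redshift://h:5439/db?user=a&password=b"))
```

Only `?`-style parameters are handled here; drivers that accept other separators would need additional parsing.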
